In traditional industrial and manufacturing environments, monitoring worker safety, enhancing operator efficiency, and improving quality inspection were manual tasks. Today, AI-enabled machine vision technologies replace many of these inefficient, labor-intensive operations, delivering greater reliability, safety, and efficiency. This article explores how deploying AI smart cameras makes further performance improvements possible, since the data used to empower AI machine vision comes from the camera itself.

AI-Enabled Machine Vision

According to an IoT Analytics report, the AI machine vision market for manufacturing and industrial environments was worth $4.1B in 2020 and is forecast to grow to $15.2B by 2025. That is a compound annual growth rate (CAGR) of 30%, compared to the 6.5% CAGR of traditional machine vision deployments. The difference arises because the next generation of real-time Edge AI machine vision is not limited to quality assurance and product inspection applications.
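The growth rate quoted above can be checked from the two market-size figures. The following is a minimal sketch, assuming the standard CAGR formula and a five-year span from 2020 to 2025; the function name `cagr` is illustrative and does not come from the report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.30 means 30%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Market size in billions of US dollars, taken from the figures in the text.
rate = cagr(4.1, 15.2, 2025 - 2020)
print(f"CAGR: {rate:.1%}")
```

The result rounds to the 30% stated in the text.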

Worker safety is a top priority in manufacturing and industrial settings, and AI-enabled smart cameras help automate monitoring and inspection in these environments. It is essential to protect employees, contractors, and other third-party operators who work in potentially unsafe environments, such as those containing dangerous mechanical equipment or hazardous materials. Behavior and Position (POSE) detection generates information that indicates whether machine operators are in danger, following standard operating procedures (SOP), or working in ways that enhance productivity and efficiency. Lastly, automated optical inspection (AOI) increases the speed and accuracy of quality control, even for difficult-to-view products, such as contact lenses.

AI for Smart Worker Safety

Fatalities still occur in industrial settings worldwide. When evaluating worker safety, facilities must also consider non-fatal work-related injuries. In addition to the emotional trauma, there is often a financial cost to take into account.

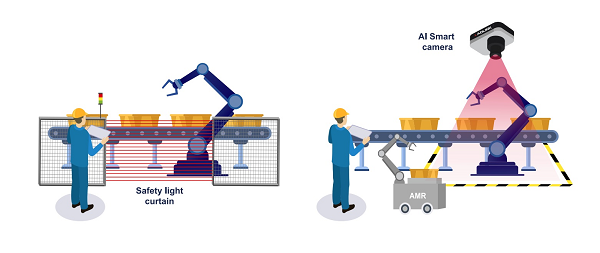

Industrial and manufacturing sites traditionally rely on human supervision and light curtains to ensure worker safety. However, human supervisors are fallible and cannot be everywhere or see everything, and safety light curtains have inherent limitations.

Geo-fencing

In modern smart factories, people often work in potentially hazardous areas with dangerous equipment, such as robotic arms. Safety light curtains protect personnel from injury by creating a sensing screen that guards machine access points and perimeters; however, they occupy a lot of floor space, are difficult to deploy, and lack flexibility. In some instances, the safety light curtain’s limited response time may create additional issues.

Conventional machine vision solutions use IP cameras and AI modules that are flexible and easy to deploy but come with considerable latency and are, therefore, unsuitable for situations requiring an immediate response.

An all-in-one AI smart camera, such as ADLINK’s NEON-2000 series, can address this latency problem. It captures images and performs all AI-related operations before sending results and instructions to related equipment, such as the robotic arm [see figure 1]. Compared to light curtains and conventional machine vision implementations, using an all-in-one smart camera minimizes delays, reduces space and bandwidth requirements, and is easy to install and maintain.

Real-time machine vision AI offers additional benefits that augment worker safety: it alerts users if they enter an unsafe zone and logs that information for retraining purposes. Data logged from past events can also shape how the system responds in the future. For example, if a worker approaches a hazardous area, instead of shutting down completely, the robotic arm could go into a functional safety process loop. Routines such as these not only improve worker safety but also increase the factory’s operating efficiency.
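A graded geo-fence response of this kind can be sketched in a few lines. The example below is a simplified illustration, not ADLINK's implementation: it assumes the camera already reports a worker's floor position as (x, y) coordinates in metres, models each zone as a polygon, and uses a standard ray-casting point-in-polygon test. The names `Action`, `inside`, and `respond`, and the zone layout, are hypothetical.

```python
from enum import Enum

Point = tuple[float, float]


class Action(Enum):
    RUN = "run at full speed"
    SLOW = "reduce speed and alert the worker"
    STOP = "stop the arm"


def inside(point: Point, polygon: list[Point]) -> bool:
    """Ray-casting test: True if the point lies inside the polygon."""
    x, y = point
    hit = False
    for i in range(len(polygon)):
        (x1, y1), (x2, y2) = polygon[i], polygon[(i + 1) % len(polygon)]
        # Only edges that straddle the point's y value can be crossed
        # by a horizontal ray cast to the right of the point.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                hit = not hit
    return hit


def respond(worker: Point, warning_zone: list[Point],
            danger_zone: list[Point]) -> Action:
    """Pick the least disruptive action that still protects the worker."""
    if inside(worker, danger_zone):
        return Action.STOP
    if inside(worker, warning_zone):
        return Action.SLOW
    return Action.RUN


# Hypothetical layout in metres: a danger zone nested inside a warning zone.
warning = [(0, 0), (6, 0), (6, 6), (0, 6)]
danger = [(2, 2), (4, 2), (4, 4), (2, 4)]

for position in [(7.0, 1.0), (1.0, 1.0), (3.0, 3.0)]:
    print(position, "->", respond(position, warning, danger).name)
```

Because the zones are plain coordinate lists, they can be redrawn in software when the line layout changes, which is the flexibility that fixed light curtains lack.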

Smart Refueling

When a fuel truck arrives at a manufacturing facility, it introduces the potential for multiple safety issues that can easily be resolved by smart AI vision. First of all, the truck may roll if the brake is not applied correctly or fails. Training the AI machine vision system to monitor the truck for movement enables it to raise an immediate alarm should the status change.

Facilities must also consider the location of operators during the refueling process, as there are different types of zoning breaches. It is critical to ensure that all workers on-site understand that there are safety risks. For example, traffic cones must be placed at the four corners of the truck, and the operative refueling the truck must wear the appropriate PPE. AI smart vision can perform all of these safety checks to confirm that procedures are followed correctly [see figure 2].
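The refueling checks described above (truck movement, cone placement, and PPE) amount to a per-frame checklist. The sketch below assumes an upstream vision model has already produced the observations; the `FrameObservation` fields, the `safety_breaches` function, and the 10-pixel drift threshold are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass
class FrameObservation:
    """Hypothetical per-frame output of an upstream vision model."""
    truck_center: tuple[float, float]  # bounding-box centre, in pixels
    cones_at_corners: int              # cones detected at the truck's corners
    operator_wearing_ppe: bool


def safety_breaches(obs: FrameObservation,
                    parked_center: tuple[float, float],
                    max_drift_px: float = 10.0) -> list[str]:
    """Return the list of breaches found in one frame (empty if none)."""
    breaches = []
    dx = obs.truck_center[0] - parked_center[0]
    dy = obs.truck_center[1] - parked_center[1]
    # The truck counts as rolling if its centre drifts from the parked position.
    if (dx * dx + dy * dy) ** 0.5 > max_drift_px:
        breaches.append("truck moved")
    if obs.cones_at_corners < 4:
        breaches.append("traffic cones missing")
    if not obs.operator_wearing_ppe:
        breaches.append("PPE missing")
    return breaches


parked = (100.0, 200.0)
frame = FrameObservation(truck_center=(130.0, 200.0),
                         cones_at_corners=3,
                         operator_wearing_ppe=False)
print(safety_breaches(frame, parked))
```

Returning every breach, rather than stopping at the first, lets a single alert and log entry record everything that was wrong in the frame.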

Immediate alerts from the AI machine vision system can warn operators of a safety breach and prevent injury. The system also creates accountability; if someone enters an unsafe zone without the appropriate PPE, the logged images can flag errors and educate employees to prevent future mistakes.

POSE Detection

For the manufacturing industry, ‘cycle time’ is a critical performance index for production efficiency. It represents the amount of time a team spends producing an item until the product is ready for shipment. Monitoring employee behavior and position with AI smart camera technology helps enforce SOP and improve worker efficiency, reducing cycle time.

Figure 3: POSE detection on an electronics manufacturing line can help increase productivity, as well as improve order, quantity, and line balance.

POSE detection from live video plays a critical role, enabling the overlay of digital content and information on top of the analog world. POSE describes the body’s position and movement with a set of skeletal landmark points, such as a hand, elbow or shoulder.
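Once landmark points are available, posture measures follow from simple geometry. As a minimal sketch, the function below computes the angle at a joint (for example the elbow) from three 2D landmarks. It assumes a pose estimation model has already supplied the coordinates; the function name and the sample points are illustrative only.

```python
import math

Point = tuple[float, float]


def joint_angle(a: Point, b: Point, c: Point) -> float:
    """Angle in degrees at landmark b, formed by the segments b-a and b-c."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    norm = math.hypot(*v1) * math.hypot(*v2)
    if norm == 0:
        raise ValueError("landmarks must not coincide with the joint")
    dot = v1[0] * v2[0] + v1[1] * v2[1]
    # Clamp to [-1, 1] to guard against floating-point rounding errors.
    cosine = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cosine))


# Made-up landmarks: shoulder, elbow, and wrist forming a right angle.
shoulder, elbow, wrist = (0.0, 0.0), (1.0, 0.0), (1.0, 1.0)
print(f"Elbow angle: {joint_angle(shoulder, elbow, wrist):.0f} degrees")
```

Tracking such angles over time is one way a system could compare an operator's movements against an ergonomic reference or an SOP step.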

AI machine vision enables factory operators and workers to focus on how physical positions affect their work. POSE data is a useful training tool, offering guidance on where operators should place their arms and hands to work more ergonomically and efficiently; it also improves their posture, which is another significant advantage [see figure 3].

Tracking whether an operator is present at their workstation on the production line also automates and verifies timesheets. Monitoring that operators are actively following the SOP supports quality control and line balancing.

AI Smart AOI

Manual product quality inspection is time-consuming and inconsistent, and it can ultimately create bottlenecks in the production line. Conventional AOI machine vision can detect easy-to-find defects faster than professional quality control staff due to its exceptional accuracy and efficiency. However, when a fault is difficult to detect, such as a flaw on a contact lens, these machine vision systems reach their limits in terms of accuracy and consistency.

While most manufacturers test random samples of their products for flaws, this is not possible on contact lens production lines, as every lens needs inspecting. Quality control staff can only view up to 4,000 lenses per shift, creating production bottlenecks. Additionally, false discoveries and missed detections are inevitable.
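The two error types mentioned above can be quantified from inspection counts. The sketch below uses the usual definitions: the false discovery rate is the share of flagged items that were actually fine, and the miss rate is the share of defective items that went unflagged. The function name and the counts are made up for illustration and are not data from a production line.

```python
def inspection_error_rates(true_pos: int, false_pos: int,
                           false_neg: int) -> tuple[float, float]:
    """Return (false discovery rate, miss rate) as fractions.

    true_pos:  defective lenses correctly flagged
    false_pos: good lenses wrongly flagged
    false_neg: defective lenses that were not flagged
    """
    flagged = true_pos + false_pos
    defective = true_pos + false_neg
    fdr = false_pos / flagged if flagged else 0.0
    miss_rate = false_neg / defective if defective else 0.0
    return fdr, miss_rate


# Made-up counts from one hypothetical inspection batch.
fdr, miss_rate = inspection_error_rates(true_pos=90, false_pos=10, false_neg=30)
print(f"False discovery rate: {fdr:.0%}, miss rate: {miss_rate:.0%}")
```

Reporting the two rates separately matters because they carry different costs: false discoveries waste good product, while missed detections let defects reach customers.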

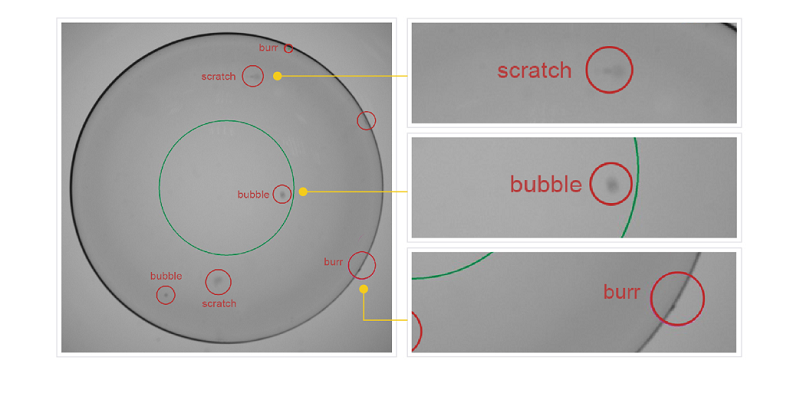

As contact lenses are transparent, implementing machine vision-based detection has historically been a challenge for the industry. Conventional AOI relies on fixed geometric algorithms to discover defects, but acquiring quality images from transparent objects is challenging, which results in unacceptable detection performance.

A more favorable solution is to collect data with AI smart cameras, use it to train the AI algorithms, and iteratively improve inspection performance. The AI smart system can identify the most common defects, including burrs, bubbles, edges, particles, scratches, and more [see figure 4], and maintain inspection logs for customer reference.

Figure 4: AI smart AOI can detect even minute defects in transparent contact lenses, significantly improving inspection rates compared to the previously used manual quality control processes.

Each AI smart camera can inspect 50 times as many lenses as manual visual inspection, with accuracy improving from 30% to as much as 95%.

Conclusion

Manufacturers that leverage robust, real-time data from AI machine vision technologies can increase uptime, implement preventative maintenance, enhance productivity and worker safety, and gain a host of other workplace benefits.

The AI machine vision applications highlighted in this article rely on deep learning algorithms. The software experts who develop these algorithms need a smart, reliable platform for executing AI model inferencing. AI smart cameras with pre-installed Edge Vision Analytics (EVA) software address many issues common to conventional AI vision systems: they improve compatibility, speed up installation, and minimize maintenance issues.

Chia-Wei Yang, Director, Business and Product Center, IoT Solution and Technology Business Unit, ADLINK

To deploy an AI vision project successfully, engineers may need as long as 12 weeks to conduct a proof of concept (PoC). It takes considerable time to overcome the learning curve of choosing optimized cameras and an AI inference engine, retraining AI models, and optimizing video streams. However, EVA software simplifies all these steps with its pipeline structure and shortens the PoC time to 2 weeks at most, serving as an excellent starting point for an AI vision project.