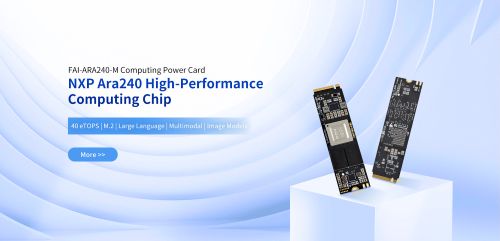

The rapid evolution of Large Language Models (LLMs) and Multimodal AI is pushing the limits of traditional edge computing. To address the growing demand for high-performance AI inference without the complexity of a full system redesign, Forlinx Embedded, a proud NXP Gold Partner, is excited to announce the global launch of the FAI-ARA240-M Edge AI Accelerator.

The Challenge: Scaling AI Performance at the Edge

As AI workloads move from simple object detection to complex Generative AI, such as Vision-Language Models (VLMs) and Vision-Language-Action (VLA) models, developers face a significant hurdle: the NPUs built into traditional embedded SoCs may not provide enough dedicated compute for real-time, local inference.

Until now, upgrading AI performance often meant redesigning the entire hardware stack, leading to increased costs, longer development cycles, and higher project risks.

The Solution: A Decoupled AI Architecture

The FAI-ARA240-M introduces a “Decoupled AI Architecture.” By separating AI acceleration from the host SoC, Forlinx allows developers to scale their system’s “brainpower” independently.

Designed in a standard M.2 2280 form factor, the FAI-ARA240-M acts as a powerful co-processor to the host. It can be integrated into existing platforms, such as those based on the NXP i.MX8M Plus or the next-generation i.MX95, through a standard M.2 M-Key slot. This modular approach significantly reduces time-to-market and allows legacy systems to evolve into AI-capable powerhouses.

Key Technical Highlights

| Feature | Specification |
| --- | --- |
| Processor | NXP Ara240 Discrete Neural Processing Unit (DNPU) |
| AI Performance | 40 eTOPS (equivalent TOPS) |
| Memory | 8 GB / 16 GB LPDDR4 options |
| Form Factor | M.2 2280 (Standard M.2 M-Key) |
| Interface | PCIe Gen4 x4 / USB 3.2 Gen 1 |
| Host Support | Optimized for NXP i.MX8M Plus, i.MX95, and more |
| OS Support | Linux, Windows |
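For a sense of scale, the two host interfaces in the table differ by more than an order of magnitude in raw throughput. The sketch below computes per-direction bandwidth from the published signaling rates (16 GT/s per lane with 128b/130b encoding for PCIe Gen4, 5 Gbit/s with 8b/10b encoding for USB 3.2 Gen 1) and estimates how long one uncompressed 1080p RGB frame would take to cross each link. The frame size and helper names are illustrative assumptions, not Forlinx figures, and protocol overhead is ignored. Real throughput also depends on the PCIe generation and lane count that the host's M.2 slot actually provides.

```python
# Illustrative estimate only: line-encoding overhead is included, but packet
# headers, flow control, and driver overhead are ignored, so real throughput is lower.

def link_bandwidth_bytes_per_s(gt_per_s: float, lanes: int,
                               payload_bits: int, coded_bits: int) -> float:
    """Per-direction data rate in bytes/s after line encoding."""
    return gt_per_s * 1e9 * lanes * payload_bits / coded_bits / 8

# PCIe Gen4: 16 GT/s per lane, 128b/130b encoding, x4 link.
PCIE_GEN4_X4 = link_bandwidth_bytes_per_s(16.0, 4, 128, 130)
# USB 3.2 Gen 1: 5 Gbit/s, 8b/10b encoding, single lane.
USB32_GEN1 = link_bandwidth_bytes_per_s(5.0, 1, 8, 10)

# Hypothetical payload: one uncompressed 1920x1080 RGB frame (3 bytes per pixel).
FRAME_BYTES = 1920 * 1080 * 3

for name, rate in (("PCIe Gen4 x4", PCIE_GEN4_X4), ("USB 3.2 Gen 1", USB32_GEN1)):
    ms = FRAME_BYTES / rate * 1e3
    print(f"{name}: {rate / 1e9:.2f} GB/s, ~{ms:.2f} ms per 1080p RGB frame")
```

Running it reports about 7.88 GB/s and 0.79 ms per frame over PCIe, versus 0.50 GB/s and 12.44 ms over USB. This is why the PCIe path matters for real-time, multi-camera workloads.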

Advanced Model Support: From Vision to Action

The FAI-ARA240-M is not just about raw numbers; it is about versatility. It supports a wide range of modern AI frameworks and architectures, enabling the next wave of industrial innovation:

- Generative AI: Real-time inference for LLMs and multimodal LLMs (MLLMs).

- Multimodal Intelligence: Seamless handling of VLMs for complex environmental understanding.

- Robotic Interaction: Supporting VLA (Vision-Language-Action) models to bridge the gap between perception and physical execution.

- Mainstream Frameworks: Full compatibility with models from TensorFlow, PyTorch, and the ONNX exchange format.
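Whether a given generative model fits on the card depends first on its weight footprint, which is roughly the parameter count multiplied by the bits per weight. The sketch below applies that rule of thumb to the two LPDDR4 options from the table. It gives a lower bound under stated assumptions: KV cache, activations, and runtime buffers are ignored, and capacities are compared in decimal GB. The parameter counts and precisions are arbitrary examples, not models Forlinx has validated. Which precisions the Ara240 toolchain supports is not covered here.

```python
# Hypothetical sizing helper: estimates weight storage only.
# KV cache, activations, and runtime buffers are ignored, so treat results as a lower bound.

MEMORY_OPTIONS_GB = (8, 16)  # the two LPDDR4 configurations listed in the spec table


def weight_footprint_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate weight storage in GB (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def options_that_fit(num_params: float, bits_per_weight: int) -> list[int]:
    """Memory options whose capacity exceeds the weight footprint alone.

    Decimal GB is used for capacity, which is slightly conservative if the
    modules are binary-sized (8 GiB is about 8.59 GB).
    """
    size = weight_footprint_gb(num_params, bits_per_weight)
    return [cap for cap in MEMORY_OPTIONS_GB if size < cap]


# Example parameter counts and precisions, chosen only for illustration.
for params in (3e9, 7e9, 13e9):
    for bits in (16, 8, 4):
        size = weight_footprint_gb(params, bits)
        print(f"{params / 1e9:>4.0f}B params @ {bits:>2}-bit: "
              f"{size:5.1f} GB weights, fits in {options_that_fit(params, bits)} GB")
```

For example, a 7B-parameter model needs about 14 GB for 16-bit weights, which leaves little headroom even on the 16 GB option. Quantizing to 4 bits brings the weights to about 3.5 GB.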

Built for the Toughest Environments

Forlinx Embedded understands that industrial applications demand more than just performance. The FAI-ARA240-M has undergone rigorous environmental testing to ensure reliable 24/7 operation. Its low-power design and optimized thermal architecture make it ideal for fanless, rugged systems where heat dissipation is a critical concern.

Target Applications

- Industrial Automation: Real-time defect inspection and multimodal sensory fusion.

- Healthcare: High-speed medical imaging and diagnostic assistance.

- Smart Transportation: Edge-based traffic analytics and autonomous navigation.

- Robotics: Drone and robotic systems requiring secure, on-device AI for critical operations.

The FAI-ARA240-M Edge AI Accelerator is available for order now. For more information, please visit the FAI-ARA240-M Product Page or contact our global sales team at sales@forlinx.com.